Hierarchical Attention Network

Preface: I decided to reproduce this 2016 paper because it is the basis of the model in vista-net (an AAAI 2019 paper); you can also find an introduction to that model in my blog here. The model also combines a hierarchical structure, which is commonly used in multimodal work, with an attention mechanism.

- You can find my implementation here. It is not flawless yet (it may contain bugs), and I will keep fixing them.

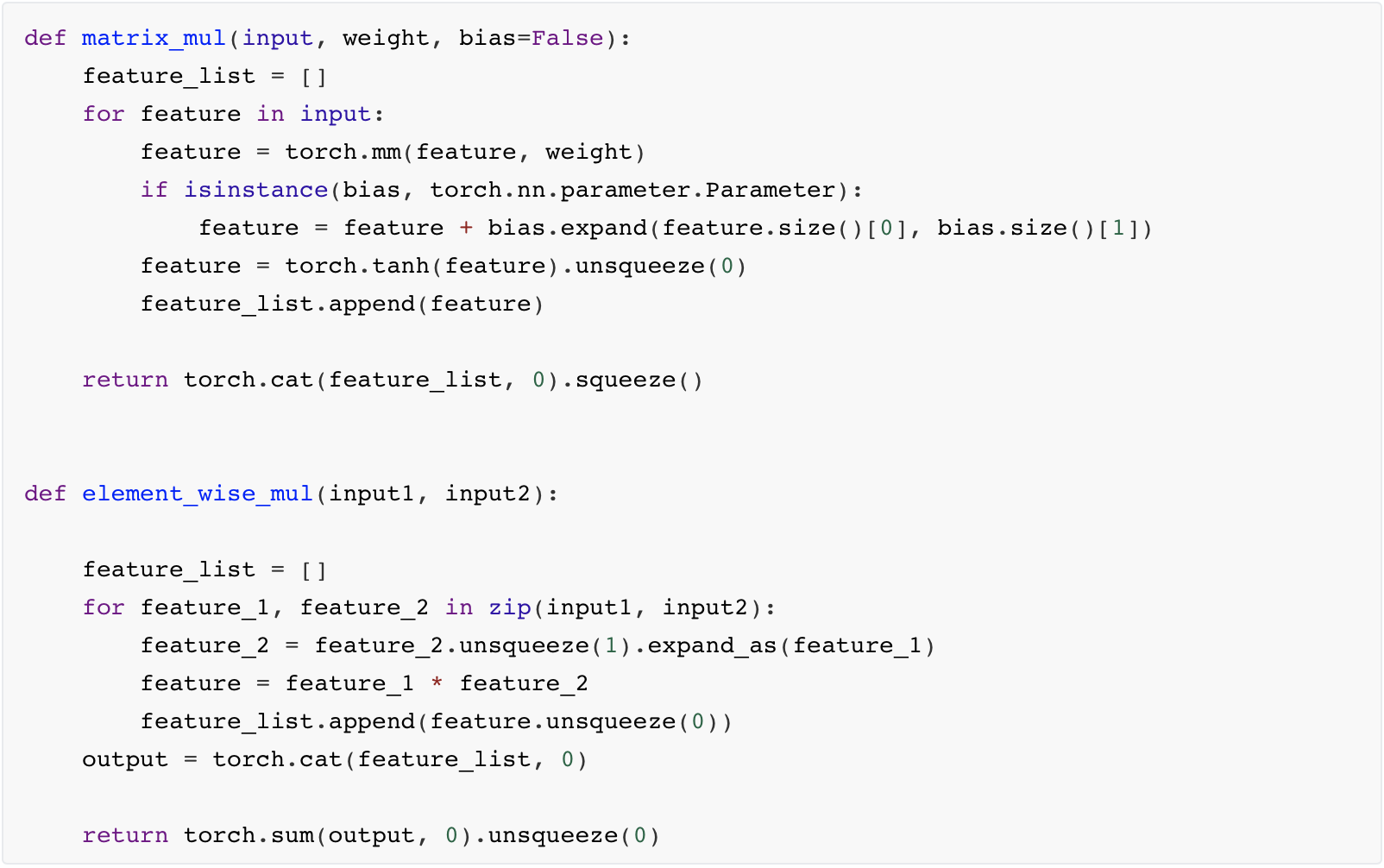

(0) Utils for Attention

We will use the following two functions to compute attention. Both are used at the word level and at the sentence level.

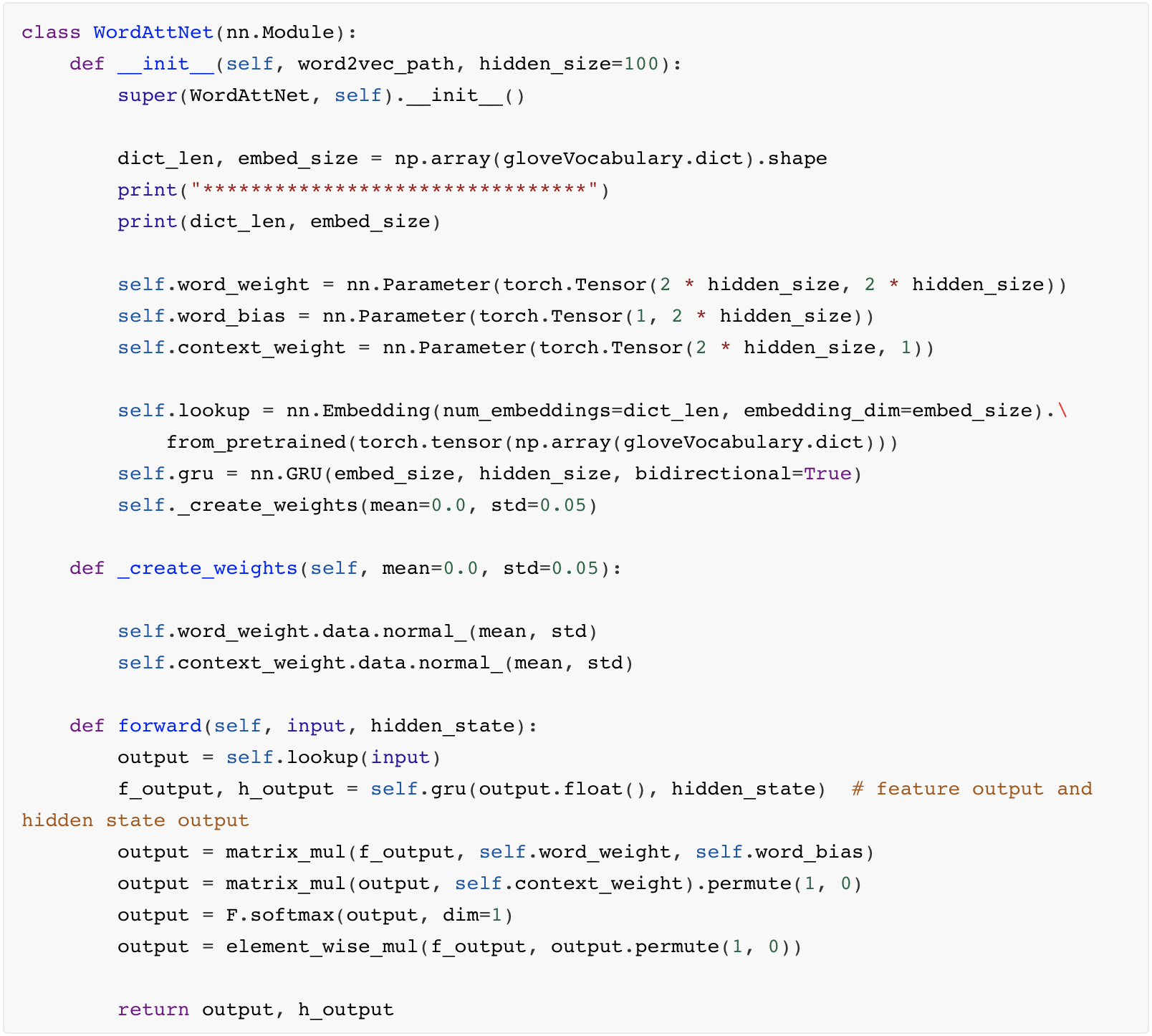

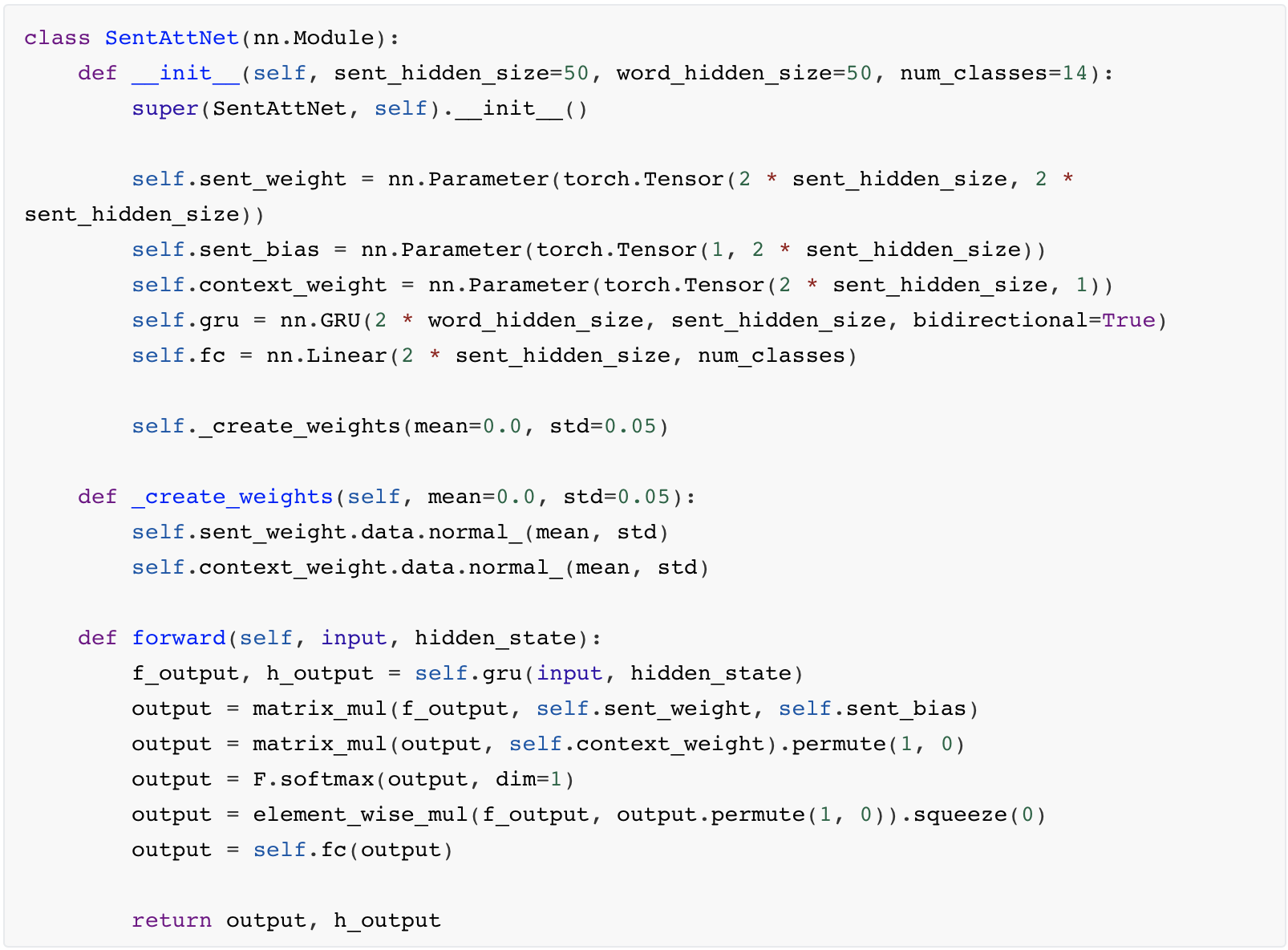
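For readers who cannot see the images, here is a minimal sketch of what two such helpers might look like in PyTorch. It follows the formulas from the paper: u = tanh(W h + b), alpha = softmax(u^T u_w), and s = sum(alpha * h). The function names `attention_scores` and `attention_pool`, the argument names, and the tensor shapes are my assumptions for illustration; they are not necessarily the ones used in the repository.

```python
import torch
import torch.nn.functional as F


def attention_scores(hidden, weight, bias, context):
    """Return attention weights of shape (batch, seq_len).

    hidden:  (batch, seq_len, dim) encoder outputs
    weight:  (dim, dim) projection matrix W
    bias:    (dim,) projection bias b
    context: (dim,) learned context vector u_w
    """
    u = torch.tanh(hidden @ weight + bias)  # (batch, seq_len, dim)
    logits = u @ context                    # (batch, seq_len)
    return F.softmax(logits, dim=1)         # each row sums to 1


def attention_pool(hidden, alpha):
    """Weighted sum of hidden states: (batch, seq_len, dim) -> (batch, dim)."""
    return (hidden * alpha.unsqueeze(-1)).sum(dim=1)


# Usage with random tensors (hypothetical sizes)
hidden = torch.randn(2, 5, 8)
alpha = attention_scores(hidden, torch.randn(8, 8), torch.zeros(8), torch.randn(8))
summary = attention_pool(hidden, alpha)  # shape (2, 8)
```

The same two helpers can serve both levels because only the meaning of the sequence axis changes: words within a sentence, or sentences within a document.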

(1) The model code

Word-level attention module.

Sentence-level attention module.

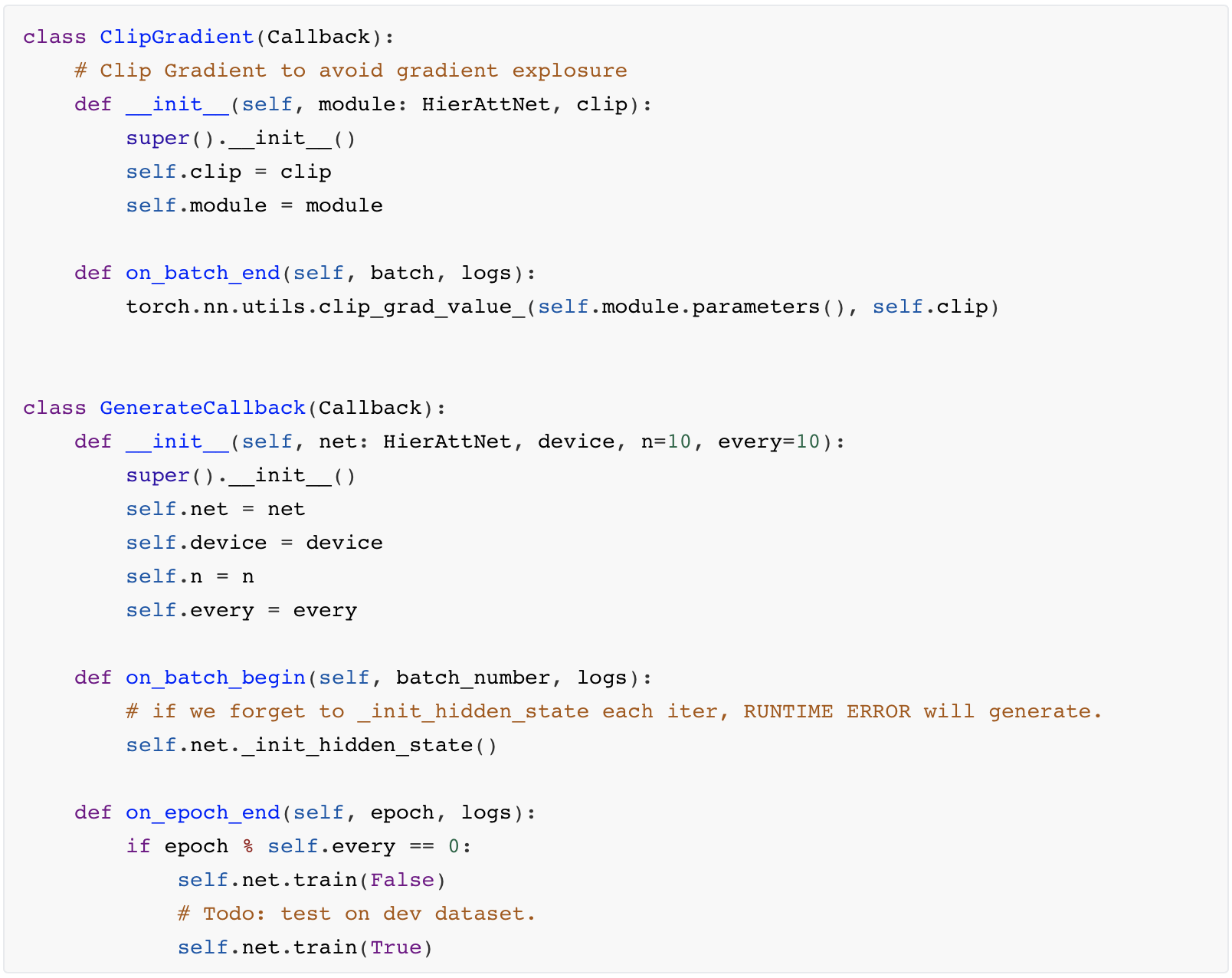

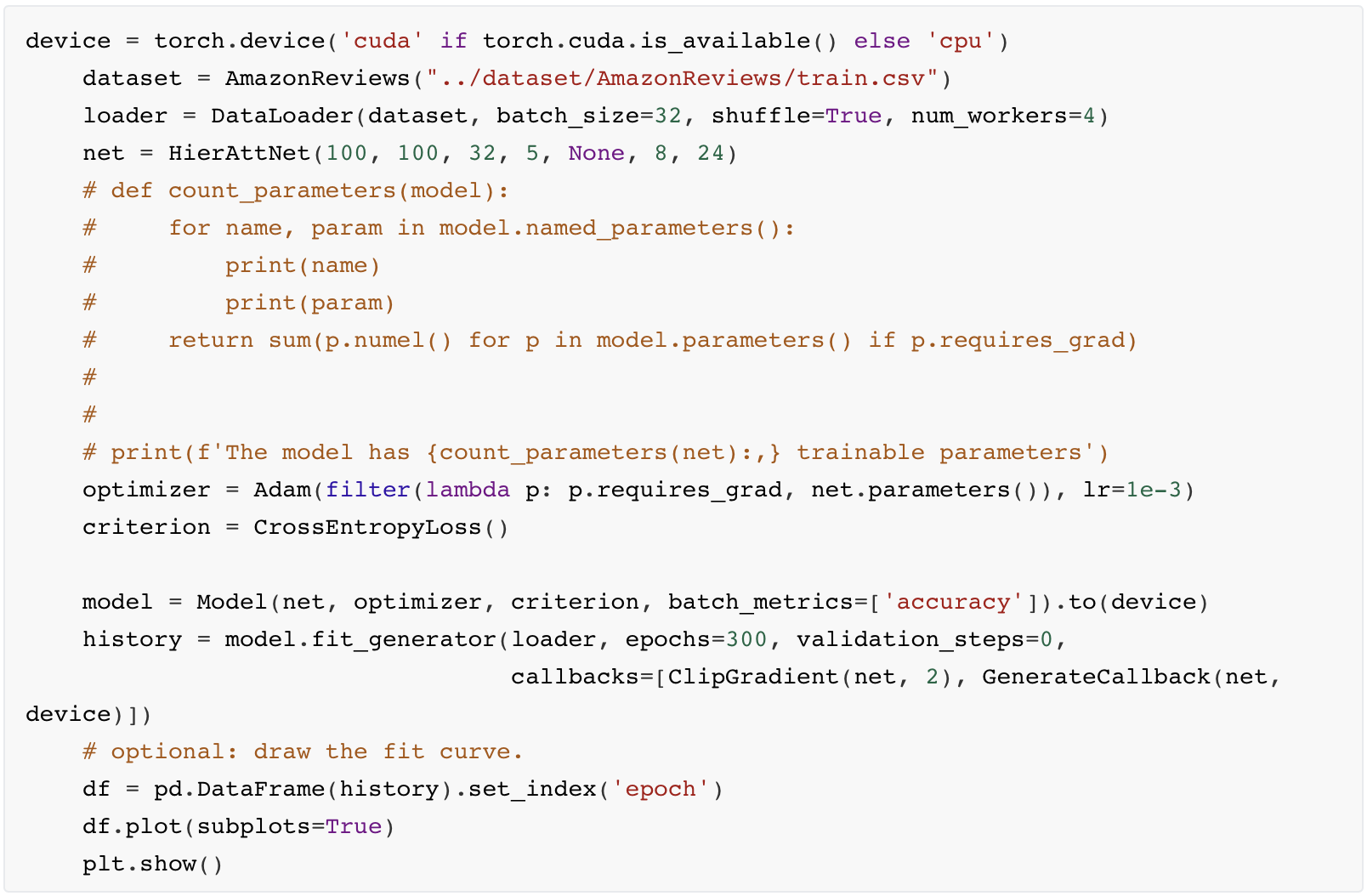
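As a rough sketch of how the two levels fit together, the code below stacks a word-level encoder and a sentence-level encoder, each a bidirectional GRU followed by attention pooling as described in the paper. The class names `AttentionEncoder` and `HierarchicalAttentionNetwork`, the hyperparameters, and the choice to share one class for both levels are my assumptions; the code in the images may be organised differently.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class AttentionEncoder(nn.Module):
    """Bidirectional GRU + attention pooling; reused for words and sentences."""

    def __init__(self, input_dim, hidden_dim):
        super().__init__()
        self.gru = nn.GRU(input_dim, hidden_dim, batch_first=True, bidirectional=True)
        self.proj = nn.Linear(2 * hidden_dim, 2 * hidden_dim)
        # Learned context vector (u_w or u_s in the paper)
        self.context = nn.Parameter(torch.randn(2 * hidden_dim) * 0.1)

    def forward(self, x):
        h, _ = self.gru(x)                              # (batch, seq, 2*hidden)
        u = torch.tanh(self.proj(h))                    # (batch, seq, 2*hidden)
        alpha = F.softmax(u @ self.context, dim=1)      # (batch, seq)
        pooled = (h * alpha.unsqueeze(-1)).sum(dim=1)   # (batch, 2*hidden)
        return pooled, alpha


class HierarchicalAttentionNetwork(nn.Module):
    def __init__(self, vocab_size, embed_dim, hidden_dim, num_classes):
        super().__init__()
        self.embedding = nn.Embedding(vocab_size, embed_dim, padding_idx=0)
        self.word_encoder = AttentionEncoder(embed_dim, hidden_dim)
        self.sentence_encoder = AttentionEncoder(2 * hidden_dim, hidden_dim)
        self.classifier = nn.Linear(2 * hidden_dim, num_classes)

    def forward(self, docs):
        # docs: (batch, num_sentences, num_words) tensor of token ids
        batch, n_sent, n_word = docs.shape
        words = self.embedding(docs.reshape(batch * n_sent, n_word))
        sent_vecs, _ = self.word_encoder(words)         # (batch*n_sent, 2*hidden)
        sent_vecs = sent_vecs.reshape(batch, n_sent, -1)
        doc_vecs, _ = self.sentence_encoder(sent_vecs)  # (batch, 2*hidden)
        return self.classifier(doc_vecs)                # unnormalised class scores


# Usage with hypothetical sizes: 4 documents, 3 sentences each, 7 words each
net = HierarchicalAttentionNetwork(vocab_size=50, embed_dim=8, hidden_dim=6, num_classes=5)
logits = net(torch.randint(0, 50, (4, 3, 7)))  # shape (4, 5)
```

Folding the sentence axis into the batch axis lets the word encoder process every sentence in one call. Note that this sketch does not mask padding, so padded positions still receive some attention weight.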

(2) Use poutyne to simplify our training code

In Main.py, we only need to do the following:

To Do Next

- There are still many things to do to improve the model.

- First, the attention weights on padding items can be set to 0.

- Second, we should do something about the original dataset, since the reviews are skewed towards positive.

- We should run some predictions on original-language data to check that the model behaves reasonably.

- Last but not least, use typing to make the code more readable for others.
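Some of the to-do items above can be sketched in a few lines. The example below shows one possible way to zero out attention on padding (softmax, multiply by a mask, renormalise) and one common way to counter a skewed label distribution (inverse-frequency class weights passed to the loss), both written with type hints. The function names and the weighting scheme are my assumptions, not something already in the repository.

```python
import torch
import torch.nn as nn
from torch import Tensor


def masked_attention_weights(logits: Tensor, mask: Tensor) -> Tensor:
    """Attention weights that are exactly 0 on padding positions.

    logits: (batch, seq_len) unnormalised attention scores
    mask:   (batch, seq_len) bool tensor, True for real items, False for padding
    """
    weights = torch.softmax(logits, dim=1) * mask.to(logits.dtype)
    # Renormalise over real items; the clamp keeps all-padding rows at 0, not NaN
    return weights / weights.sum(dim=1, keepdim=True).clamp(min=1e-12)


def inverse_frequency_weights(labels: Tensor, num_classes: int) -> Tensor:
    """Per-class loss weights: rare classes get larger weights."""
    counts = torch.bincount(labels, minlength=num_classes).clamp(min=1).float()
    return labels.numel() / (num_classes * counts)


# Usage: two real words followed by two padding positions
logits = torch.zeros(1, 4)
mask = torch.tensor([[True, True, False, False]])
alpha = masked_attention_weights(logits, mask)  # padding positions get weight 0

# Usage: a skewed label set (three samples of class 0, one of class 1)
class_weights = inverse_frequency_weights(torch.tensor([0, 0, 0, 1]), num_classes=2)
loss_fn = nn.CrossEntropyLoss(weight=class_weights)
```

Resampling the dataset is an alternative to loss weighting; which works better for these reviews would need to be checked experimentally.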